For most employees at ConSol, today is the last day before their Christmas vacation. Eight of us took that opportunity and organized our first FedEx day:

During the full-day event, we formed small teams and worked on innovative projects that we are enthusiastic about.

At the end of the day, we had small presentations showing the results to the company.

In this blog post we’d like to share the projects we came up with:

Infinispan Cluster on Raspberry Pis

There seems to be a lot of interest in building Raspberry Pi clusters for demo projects.

One of the teams took the chance and built our own, with five Pis running an Infinispan distributed cache.

It turns out that having a real hardware cluster yields different results than testing Infinispan locally.

While clean shutdowns and startups are no problem, unplugging and replugging network cables is a much greater challenge for the Infinispan infrastructure.

The Raspberry Pi hardware is sufficient to run embedded Infinispan instances, but the JBoss-based distributions don’t seem to fit well with the hardware.

Kiosk systems based on Raspberry Pis

A Raspberry Pi and a large screen are all that is needed to build an information kiosk.

One of the teams built a kiosk for our entrance hall, showing the current event schedule for our meeting rooms.

Access to the event database was implemented as a Spring application. On the front-end side, HTML5 and JavaScript magic was used to visualize the data.

Evaluating the Ceylon Programming Language

Ceylon 1.0.0 was released recently, and one of the teams took the chance to gain some first experience with the new programming language.

Ceylon runs on the JVM, and can also be compiled to JavaScript. It comes with an Eclipse-based IDE, which is, however, not very easy to run.

The strong type system enables a lot of tool support, but sometimes also results in errors that are hard to understand for the novice.

Video Recordings for the ConSol Academy

The ConSol Academy is a company event where employees share their knowledge with their peers. One team used the FedEx day to build prototype hardware for recording academy talks on video, so that the talks can be archived for colleagues who cannot attend.

Like most of the other projects, the video recording hardware was based on the Raspberry Pi.

The Pi was equipped with a small camera and a microphone, and it streams the data over the network for recording.

Summary

The Raspberry Pi is currently the most popular thing among our developers. It is easy to set up, and provides an open platform for a wide range of projects.

The FedEx day was a great opportunity to experiment with it, and it is also a good way to get together with colleagues who work on other projects.

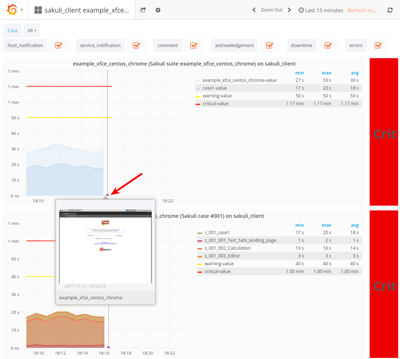

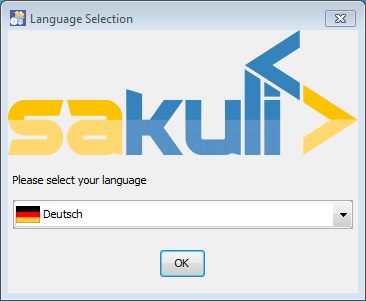

The failure of a monitoring server is not in itself critical to production. Nevertheless, it poses a risk. Without monitoring, operations are flying blind. Errors that cost money or annoy customers go undetected. Matthias Gallinger has thought about how to keep monitoring running with as few interruptions as possible. In doing so, he made sure to achieve this goal with the simplest possible means. With the solution presented, admins are spared from learning and operating complex cluster frameworks.

The OMD (Open Monitoring Distribution) developer team released version 1.10 today.

Ordered in February, delivered in the last week of May. The smart power outlets from AVM seem to be in high demand. In any case, I can now use my FRITZ!BOX to record how much power certain devices consume, either at the moment or over a long period of time.

For all fans of lightweight JMX monitoring, the latest issue of the Perl Magazin features an introduction to jmx4perl. The 9-page article covers JMX in general, the jmx4perl (or Jolokia) agents, and programming with
